|

I am a recent PhD graduate from the Robotics Institute at Carnegie Mellon University. During my PhD, I worked on assistive human-robot interaction with my advisers Henny Admoni and Kris Kitani, and I had the good fortune to work with a lot of wonderful people along the way. I was funded in part by the NSF GRFP. Additionally, I was selected to participate in the Meta AI Mentorship Program, where I worked with Chris Paxton to explore how foundation models could be used to capture people's preferences for completing complex household rearrangement tasks. In 2020, I completed an internship at Meta's Reality Labs, where I worked with Ruta Desai and Kevin Carlberg to study the effect of visual and optimal assistance on people as they completed a complex house cleaning task in an XR simulation built in Habitat. I completed my undergrad at Indiana University, Bloomington in 2016, where I obtained a BS in Computer Science and a BS in Cognitive Science. While there, I was fortunate to work with David Crandall, Chen Yu, and Kris Hauser. Email / CV / Google Scholar / GitHub / LinkedIn |

|

|

I'm interested in how people interact with intelligent agents. In my PhD work, I explored this in the context of assistive home robotics, where I developed several algorithms for seamless value alignment to people's goals during household tasks using naturalistic behaviors. This work has motivated my interests in human-robot interaction, machine learning, computer vision and perception, and reinforcement learning, especially through human feedback. |

|

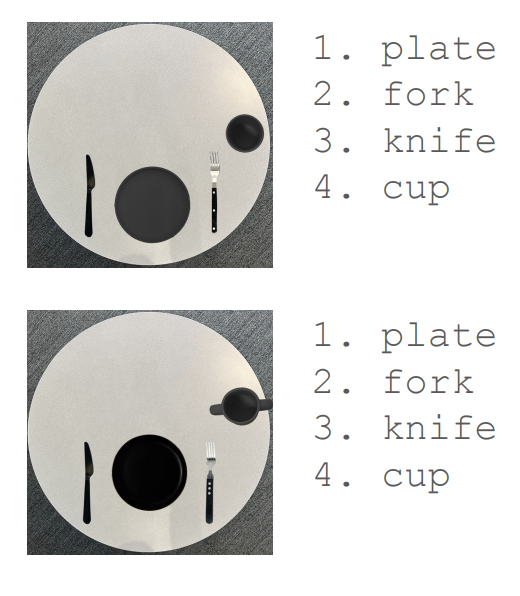

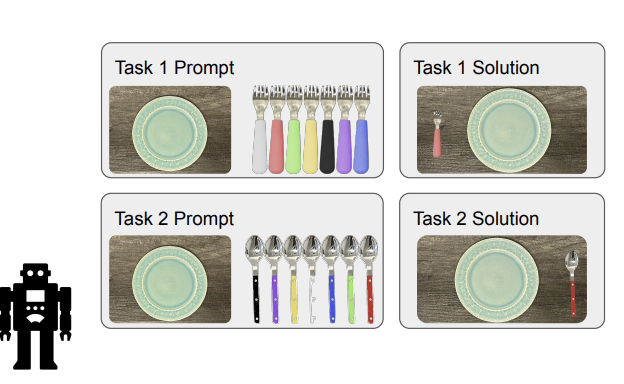

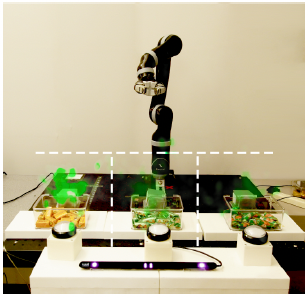

Benjamin A. Newman, Pranay Gupta, Kris Kitani, Yonatan Bisk, Henny Admoni, and Chris Paxton arXiv, 2024 pdf / bibtex We present a VLM-based method to solve multi-step household object rearrangement tasks, such as setting a table, according to personal preferences. We compare multiple state-of-the-art VLMs in a simulated setting. We then collect a large dataset of 995 naturalistic table setting demonstrations and evaluate our method on its ability to capture these preferences. |

|

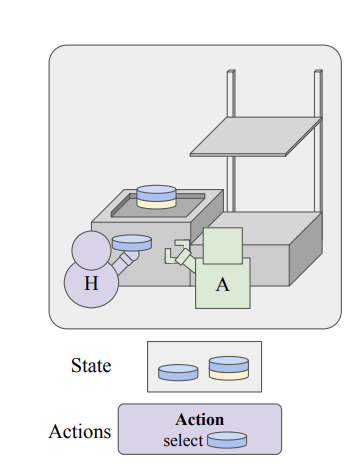

Benjamin A. Newman, Chris Paxton, Kris Kitani, and Henny Admoni AAMAS, 2024 pdf / bibtex We present an algorithm that bootstraps online linear regression problems with large nonlinear models, using in-situ naturalistic corrective actions. |

|

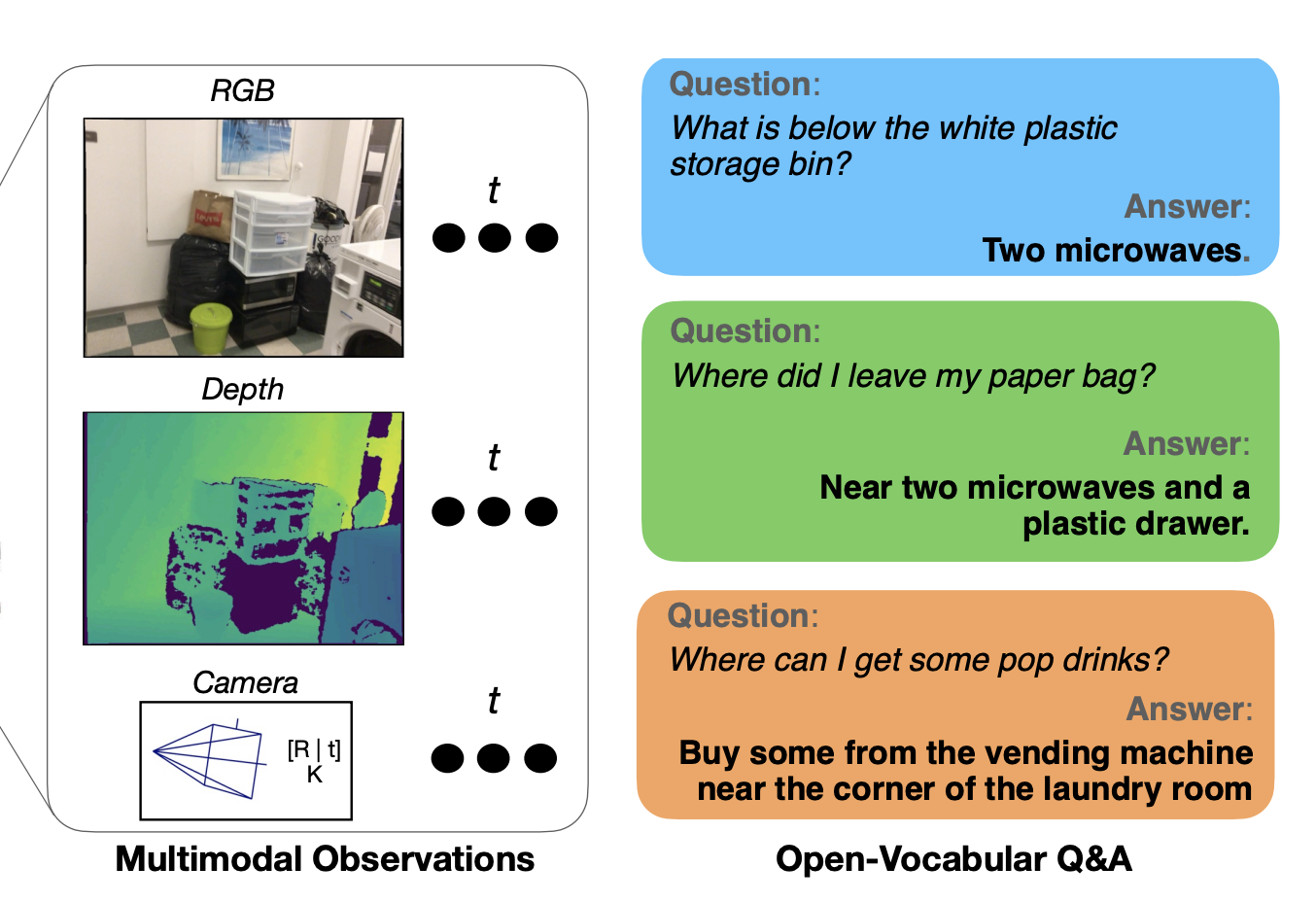

Arjun Majumdar, Anurag Ajay, Xiaohan Zhang, Pranav Putta, Sriram Yenamandra, Mikael Henaff, Sneha Silwal, Paul Mcvay, Oleksandr Maksymets, Sergio Arnaud, Karmesh Yadav, Qiyang Li, Benjamin A. Newman, Mohit Sharma, Vincent Berges, Shiqi Zhang, Pulkit Agrawal, Yonatan Bisk, Dhruv Batra, Mrinal Kalakrishnan, Franziska Meier, Chris Paxton, Alexander Sax, Aravind Rajeswaran CVPR, 2024 pdf / bibtex We present a modern formulation of Embodied Question Answering (EQA) as the task of understanding an environment well enough to answer questions about it in natural language. |

|

Benjamin A. Newman, Pranay Gupta, Kris Kitani, Yonatan Bisk, Henny Admoni, and Chris Paxton Human – Large Language Model Interaction Workshop at HRI, 2024 pdf / bibtex We present a VLM-based method to solve object rearrangement problems according to personal preference from prior user demonstrations. |

|

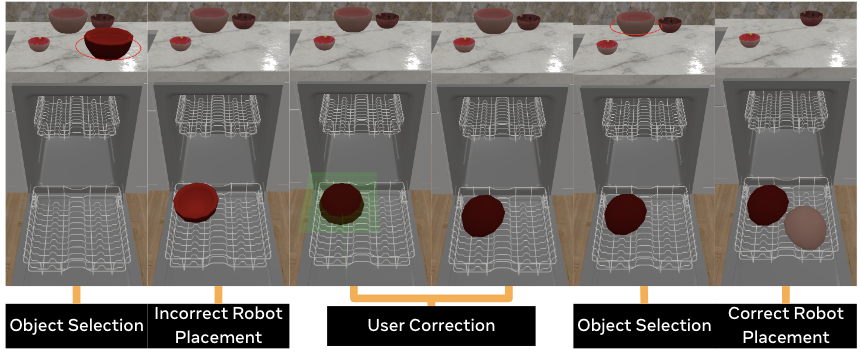

Benjamin A. Newman, Chris Paxton, Kris Kitani, and Henny Admoni Companion of the HRI Proceedings, 2023 pdf / bibtex We present an algorithm for using naturalistic corrections to update a robot's model of a user's goal in a simulated object rearrangement task. |

|

Benjamin A. Newman, Reuben Aronson, Kris Kitani, and Henny Admoni Frontiers in Robotics and AI, 2022 pdf / bibtex We define assistance as a perspective on human-robot interaction and provide cross-domain design axes that are critical to consider when developing assistive robotics. We support these through a broad review of recent assistive robotics research. |

|

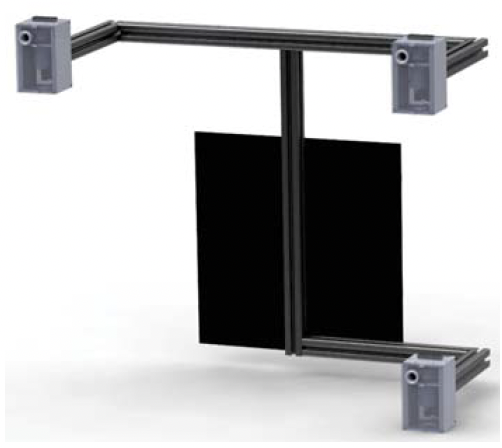

Benjamin A. Newman*, Reuben Aronson*, Kris Kitani, and Henny Admoni IJRR, 2021 pdf / bibtex / Project Page We present a multi-modal dataset of eye gaze, joystick activation, egocentric video, robot motion, and arm electromyography taken during a human-robot co-manipulation task under varying degrees of robotic assistance. * denotes equal contribution |

|

Benjamin A. Newman*, Abhijat Biswas*, Sarthak Ahuja, Siddharth Girdhar, Kris Kitani, and Henny Admoni ICSR, 2020 pdf / bibtex We explore how robot motion that is expressed in advance of an expected phenomenon (e.g., a robot reaching for an object it expects the user will want) affects the eventual decision the person makes. * denotes equal contribution |

|

arXiv, 2020 Benjamin A. Newman, Kevin Carlberg, and Ruta Desai pdf / bibtex We examine how presenting users with optimal routing assistance through a visual display would affect their ability and sense of agency when completing a complex object rearrangement task. |

|

Alexander Baikovitz*, Jonathan Duffy*, Zachary Sussman*, Benjamin A. Newman, and Henny Admoni CHI 2019 Workshop on Hacking Blind Navigation, 2019 pdf / bibtex We develop a portable haptic device that helps visually impaired users navigate real-world environments. * denotes equal contribution |

|

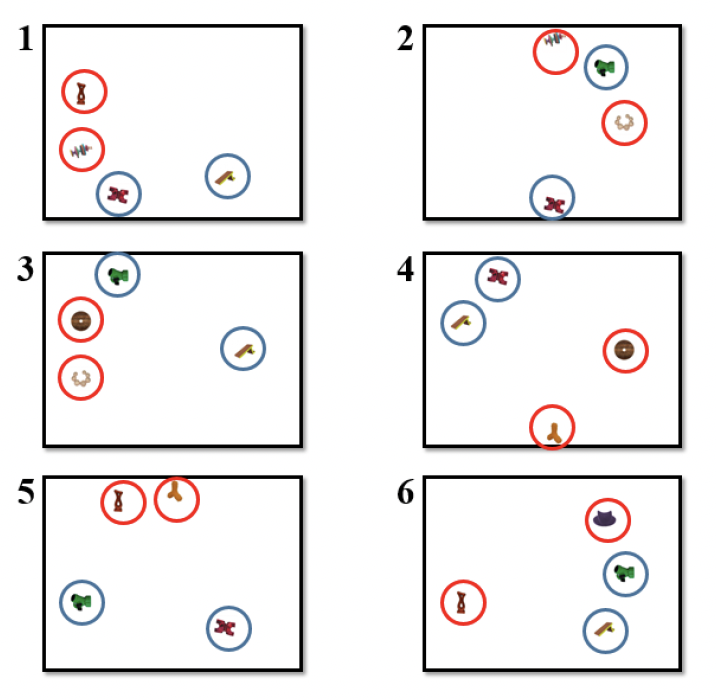

Alexa Romberg, Yayun Zhang, Benjamin A. Newman, Jochen Triesch, and Chen Yu ICDL Epi-Rob, 2016 pdf / bibtex We study how cross-situational statistics drive visual attention. Specifically, we examine how attention differs towards objects that are displayed infrequently versus those that are displayed frequently. |

|

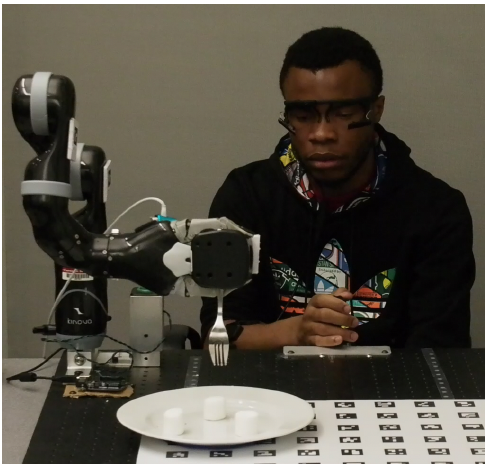

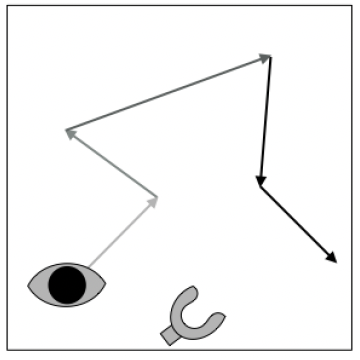

Benjamin A. Newman, Kris Kitani, and Henny Admoni We attempt to discover joint hand and eye gaze primitives for human-robot co-manipulation in an assisted eating task that could be useful for user goal recognition. |

|

Thank you Jon Barron for creating and open-sourcing a fantastic website! |