Requirements Documentation

DISTRIBUTED VERSIONING SYSTEM

VOLVOX

URL: http://www.cmu.edu/volvox

By:

Rahul Raheja

Adam Goldhammer

Karthik Krishnan

Meghal Gosalia

Contents

Quality Attributes/ Functional Requirements

Architecture (diagrams using ACME Architecture Description Language - ADL)

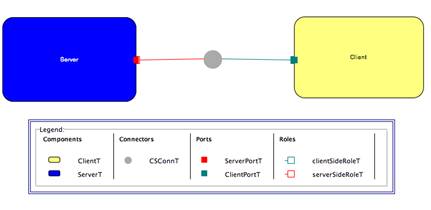

Communication Architecture: Client Server (Request Response Type)

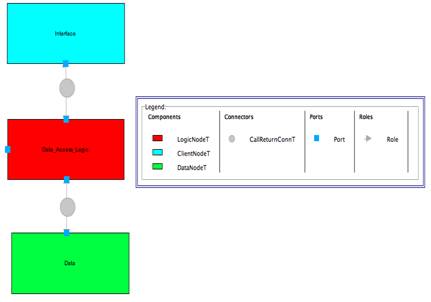

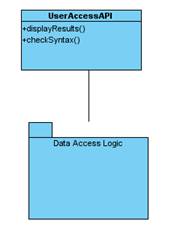

Node Architecture: Tiered Architecture (Call Return Type)

Code Organization - Class Diagram

Interactions

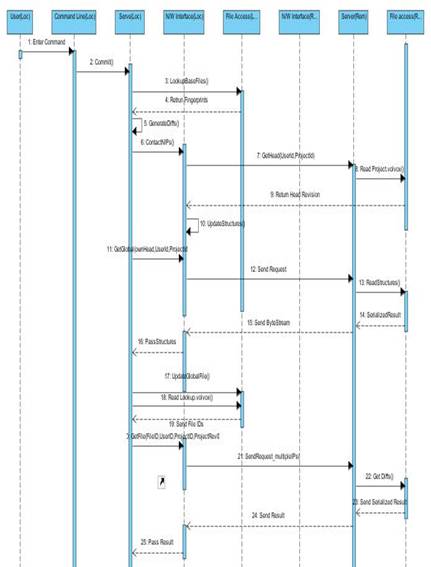

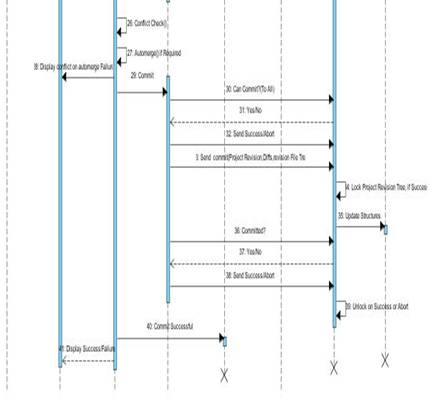

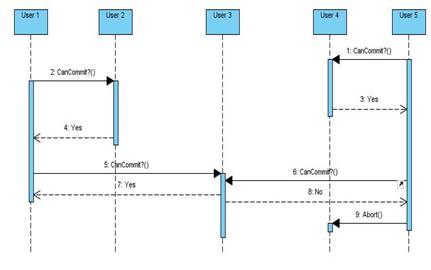

& Communication Protocol - Sequence Diagrams

Abstract

Most current versioning systems rely on a central server to store the project, from which users check out and check in files. This leaves a single point of failure in the network and incurs costs for hosting the files. A distributed approach would be cheaper, because it uses the local disks of the users for storage, and more failure tolerant, because no single node can fail and bring down the entire system. Even with these advantages, current distributed versioning systems still try to force users into a linear workflow: users are expected to fold changes back in with each commit. The Volvox versioning system provides distributed file storage and nondestructive editing designed for networks that are prone to fragmentation, allowing for a more streamlined workflow than other distributed versioning projects while still offering their advantages. Volvox will allow users to commit files to the repository even if they are operating with only one other visible node, and will attempt to automatically resolve conflicts during a commit.

Project Description

Revision control is the management of multiple versions of a file and is commonly used in collaborative projects. Manually managing files is difficult when multiple people are working on the same project, making automated systems very helpful in tracking changes, recovering old versions, and synchronizing users.

There are many popular version control systems, such as Subversion and Perforce. However, these systems are based on centralized server access: users contact a designated node each time they need to check a file in or out. This kind of architecture makes the server a single point of failure. For example, if the server is compromised or fails due to some fault, the users can’t collaborate; version tracking is also lost when the network is partitioned. Moreover, people have to buy server space on nodes that provide versioning capabilities to host their projects. Our motivation comes from the fact that the users have enough storage space on their own machines to host their projects and even version them. Hence, we propose to remove the central server and host the version files on the collaborating users’ own machines. This gives rise to the concept of a distributed versioning system.

Today, some systems already provide distributed versioning capabilities; Mercurial is one such system. Instead of using one computer as a server, Mercurial uses file storage on all users’ machines, avoiding the central failure point. However, we believe that such systems still fall short on availability.

Consider the following scenario:

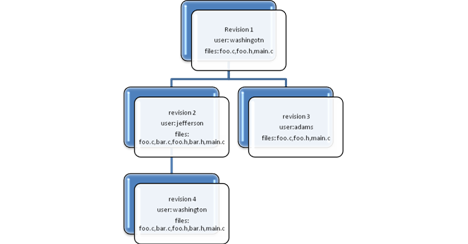

There are two users, Alice and Bob, working on a project (Figure 1). They have the same working head, “d”. Bob commits twice, creating “e” and “f”, and gets a conflict since Alice has her own working copy. Bob resolves the conflicts and then finalizes the commit. At this commit, the changes are not pushed out to other users. So if Bob goes down or goes offline, and Alice then wants to commit or update, she will not get a consistent set of files. The problem here is pushing out committed changes to other users for redundancy, so that Alice has availability in most situations.

Figure 1. Picture from [1]

Volvox is a distributed versioning system that attempts to address this issue.

Volvox has all the features of a distributed versioning system and adds more availability at the cost of a minor increase in network bandwidth. Volvox also allows the project tree to branch as users commit, and then attempts to merge the branches automatically; where that fails, users resolve the conflicts later. Volvox’s distributed nature prevents any single node failure from stopping the network. Storing the files on multiple nodes removes the single point of failure, making the system more fault-tolerant and less expensive. The project branching provides a much easier workflow than Mercurial-type systems, allowing coders to concentrate on coding while the system merges most files.

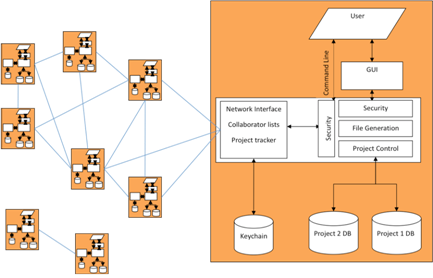

Figure 2 shows the overall architecture of the system and how users communicate with each other. Even in case of a network partition, users can commit and get the latest versions within their partition (even as nodes go offline or leave the partition); later, when the partition rejoins the main network, the partitions merge.

Figure 2. Volvox System View

Quality Attributes/ Functional Requirements

| Quality Attribute | Availability |
| --- | --- |
| Stimulus | Not able to get access to revision details, updates or revision files |
| Source(s) of the stimulus | Checkout or update of project |
| Relevant Environmental Conditions | Work timing differences or inter-continental fiber optic cable disruptions |
| Architectural Elements | Data Access Logic |
| System Response | User able to get latest versions of files even if all nodes not online. Access to project revision files, whether or not all project members are online, or node is in a partitioned network |
| Response Measure | All files updated |

| Functional Requirement | Portability |
| --- | --- |
| Stimulus | Installing the Volvox software on a COTS hardware unit fails |
| Source(s) of the stimulus | Customer |
| Relevant Environmental Conditions | Volvox software is being prepared for deployment at the customer location |
| Architectural Elements | Volvox application software and hardware |
| System Response | Volvox software is loaded on the device hardware platform |
| Response Measure | All application properties and features are installed in a reasonable period of time |

| Functional Requirement | Usability |
| --- | --- |
| Stimulus | User rejects software because of having to change the way he is used to using a versioning system |
| Source(s) of the stimulus | Volvox use cases |
| Relevant Environmental Conditions | During initial distribution of product |
| Architectural Elements | User Interface component of Volvox |
| System Response | Behavior as expected from a client server system |
| Response Measure | User study showing good acceptance |

| Quality Attribute | Modifiability |
| --- | --- |
| Stimulus | Making changes to existing modules to add/remove functionality causes changes in more than one place |
| Source(s) of the stimulus | Developers/Maintainers |
| Relevant Environmental Conditions | Adding new functionality or improving existing functionality |
| Architectural Elements | Volvox application components |
| System Response | Each functionality is implemented as a separate module, which eases modification |
| Response Measure | Modification time is as low as possible |

| Quality Attribute | Security |
| --- | --- |
| Stimulus | The files are compromised during transfer. Volvox assumes that the individual nodes are secure and files need not be hashed |
| Source(s) of the stimulus | Volvox application software |
| Relevant Environmental Conditions | File transfer |
| Architectural Elements | Application Network Interface Module |
| System Response | Files are encrypted during transfer |
| Response Measure | - |

| Quality Attribute | Performance |
| --- | --- |
| Stimulus | Response time is too high |
| Source(s) of the stimulus | Volvox use cases |
| Relevant Environmental Conditions | When fewer users are online |
| Architectural Elements | Push/Pull update module |
| System Response | Parallel download and update of files (limited by the number of users online) |
| Response Measure | Action completes in a reasonable time using the minimum resources possible |

| Functional Requirement | Flexibility |
| --- | --- |
| Stimulus | Volvox is not able to integrate with existing editors and hence loses market interest |
| Source(s) of the stimulus | Promote/Increase product influence sales by having it as an embeddable plug-in in existing prominent project editors |
| Architectural Elements | Volvox components should have distinct APIs available and the development has to be modularized. |
| System Response | Embed distributed versioning techniques in |
| Response Measure | Ability to include Volvox versioning techniques in different existing editors, e.g. Eclipse, NetBeans, etc. |

| Quality Attribute | Scalability |
| --- | --- |
| Stimulus | The response time of the system degrades |
| Source(s) of the stimulus | More users working/joining project |
| Relevant Environmental Conditions | |
| Architectural Elements | |
| System Response | |
| Response Measure | Responsiveness not reduced when more users are working at the same time |

| Functional Requirement | Parallel Development |
| --- | --- |
| Stimulus | Product not delivered |
| Source(s) of the stimulus | 2 months development time and testing time |
| System Response | Components Modularized |
| Response Measure | Each developing in parallel |

System Design

Architecture (diagrams using ACME Architecture Description Language - ADL)

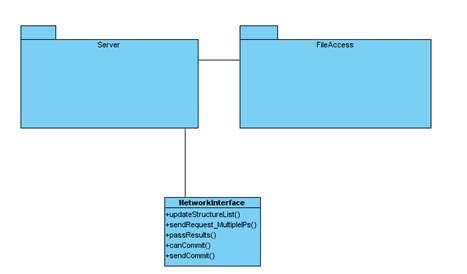

Communication Architecture: Client Server (Request Response Type)

CSConnT (connector protocol for volvox) - HTTP

Quality attributes & Requirements promoted by Client Server architecture:

- Scalability (Users can join in and start making connections)

- Availability (If one client goes down during file transfer, or any other operation, connections to others can be made using the Coconut protocol)

- Performance (Multiple clients concurrently making connections)

Node Architecture: Tiered Architecture (Call Return Type)

Quality attributes & Requirements promoted by Tiered Architecture:

- Modifiability (All 3 components have separate functionality that is accessible through well-defined interfaces, and hence changes in one are hidden from the others)

- Parallel Development (All 3 components have separate functionality that is accessible through well-defined interfaces, and hence changes in one are hidden from the others)

- Flexibility (Modularized components give rise to well-defined access APIs that enable software to be integrated with multiple)

Architectural Elements

- Data Access Logic - Server software and other helper modules

- User Interface

- Project File System

- Connector defining communication protocol

- Ports used for accepting data (and converting formats if required)

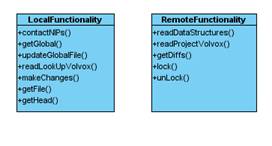

Each node will act as a client as well as a server.

The node behaving as a client should be able to perform the following functions:

1) Checkout project

2) Update project

3) Add Files

4) Delete Files

5) Commit files

6) Work on multiple projects

7) Make SECURE connection to other clients

The node behaving as a server should be able to perform the following functions:

1) Receive connections from other clients

2) Return response for the following requests:

a. Current highest revision number

b. Diffs (for file changes) between two file versions

c. File metadata

d. Project revision tree
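The four server-side requests listed above could be served through a small dispatch function. The following is a minimal sketch under stated assumptions, not the Volvox implementation: the request names, the `NodeState` fields, and the use of Python's standard `difflib` module to produce the diffs are all hypothetical choices made for illustration.

```python
import difflib
from dataclasses import dataclass, field


@dataclass
class NodeState:
    """Hypothetical in-memory state of one node (all field names are assumptions)."""
    highest_revision: int = 0
    # file id -> {revision number -> file content}
    file_versions: dict = field(default_factory=dict)
    # file id -> metadata dictionary
    file_metadata: dict = field(default_factory=dict)
    # project revision tree, kept as raw text in this sketch
    revision_tree: str = ""


def handle_request(state, request, **params):
    """Return the response for one of the four server-side requests."""
    if request == "highest_revision":
        return state.highest_revision
    if request == "diff":
        versions = state.file_versions[params["file_id"]]
        old = versions[params["old"]].splitlines()
        new = versions[params["new"]].splitlines()
        # A unified diff is one possible diff format; the source does not fix one.
        return list(difflib.unified_diff(old, new, lineterm=""))
    if request == "file_metadata":
        return state.file_metadata[params["file_id"]]
    if request == "revision_tree":
        return state.revision_tree
    raise ValueError(f"unknown request: {request}")
```

Keeping the dispatch separate from the transport means the same function could sit behind the HTTP connector or be called directly in tests.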

Code Organization - Class Diagram

1. System View

2. DataAccessLogic Package

3. ServerPackage

4. FileAccess Package

Data Structures

Tracker

Maps user IDs to the IP addresses used to contact them

(In case a user moves, the change is reflected in the tracker at other nodes using the Tracker Update protocol, which can be separately implemented, or as a part of other protocols)

Userid1= xxx.xxx.xxx.xxx

Userid2= xxx.xxx.xxx.xxx
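As an illustration of the tracker format shown above, the sketch below parses `userid= address` lines into a dictionary and applies an address change. The function names are hypothetical, the Tracker Update protocol itself is not modeled, and the addresses in the usage example are reserved documentation addresses rather than real nodes.

```python
def parse_tracker(text):
    """Parse 'userid= address' lines into a {user id: address} dictionary."""
    tracker = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or "=" not in line:
            continue  # skip blank or malformed lines
        user_id, _, address = line.partition("=")
        tracker[user_id.strip()] = address.strip()
    return tracker


def update_tracker(tracker, user_id, new_address):
    """Record that a user moved; returns True if the stored entry changed."""
    changed = tracker.get(user_id) != new_address
    tracker[user_id] = new_address
    return changed


def dump_tracker(tracker):
    """Serialize the tracker back into the line format used above."""
    return "\n".join(f"{user}= {addr}" for user, addr in tracker.items())
```

For example, `parse_tracker("Userid1= 192.0.2.10\nUserid2= 192.0.2.11")` yields a two-entry dictionary that `update_tracker` can then modify in place.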

Reverse Lookup

Maps project revision numbers to their associated file IDs

ProjRevsionId1 = fileId1, fileId2, fileId3

ProjRevsionId2 = fileId1, fileId2, fileId4
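A short sketch of how the reverse lookup could be loaded and queried follows. It assumes the `revision = file, file, ...` line format shown above; the function names are hypothetical, and the comparison helper is only one example of a query that such a table makes cheap.

```python
def parse_reverse_lookup(text):
    """Parse 'revision = file, file, ...' lines into {revision id: [file ids]}."""
    lookup = {}
    for line in text.splitlines():
        if "=" not in line:
            continue  # skip blank or malformed lines
        revision, _, files = line.partition("=")
        lookup[revision.strip()] = [f.strip() for f in files.split(",") if f.strip()]
    return lookup


def files_only_in(lookup, revision, other):
    """File ids associated with `revision` but not with `other`, in stored order."""
    other_files = set(lookup[other])
    return [f for f in lookup[revision] if f not in other_files]
```

With the two example entries above, `files_only_in(lookup, "ProjRevsionId2", "ProjRevsionId1")` would report `fileId4`.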

Project Config file

Will store the project related global constants such as:

- LocalFileNumberCounter

- CurrentRevisionHead

- CurrentBranch

Volvox Config file

Will store the software (Volvox) related global constants such as:

- Port Number

- Mappings of ProjectId to Root directory and Project Name

- userId
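The source does not specify an on-disk format for the Volvox config file. As a sketch only, the example below assumes an INI layout with a `[volvox]` section and one `[project:<id>]` section per project, read with Python's standard `configparser`; the section names, key names, and sample values are all hypothetical.

```python
import configparser
from dataclasses import dataclass


@dataclass
class ProjectEntry:
    name: str  # project name
    root: str  # project root directory


@dataclass
class VolvoxConfig:
    port: int
    user_id: str
    projects: dict  # project id -> ProjectEntry


def load_volvox_config(text):
    """Build a VolvoxConfig from INI text (the layout is an assumption)."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    prefix = "project:"
    projects = {}
    for section in parser.sections():
        if section.startswith(prefix):
            projects[section[len(prefix):]] = ProjectEntry(
                name=parser[section]["name"],
                root=parser[section]["root"],
            )
    return VolvoxConfig(
        port=parser.getint("volvox", "port"),
        user_id=parser["volvox"]["userId"],  # option names are case-insensitive
        projects=projects,
    )


SAMPLE = """
[volvox]
port = 8080
userId = washington

[project:p1]
name = demo
root = /home/washington/demo
"""
```

Calling `load_volvox_config(SAMPLE)` returns a config object with one project entry keyed by `p1`.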

Revision Trees

Each project will consist of a project file and multiple file revision tree files. All these files will be stored in a file named “.volvox” at the project root path. The project file is structured as a list of successive project revisions, with each entry containing the revision ID, the parent revision ID, and a list of file ID/file name pairs altered in this revision. Each file revision tree is structured similarly, with a header specifying the file path, followed by entries containing a revision ID, the parent revision offset, and a set of differences from the parent. The revision ID in both files consists of a monotonically increasing number and the username of the author.
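The revision ID scheme described above (author username plus a monotonically increasing number) can be sketched as follows. The textual layout `<username><number>` and the rule that usernames do not end in a digit are assumptions made for this example, as are the function names.

```python
import re

# Assumed layout: username immediately followed by the number, e.g. "adams3".
# Assumes usernames do not themselves end in a digit.
_REVISION_ID = re.compile(r"^(?P<user>.*?)(?P<number>\d+)$")


def parse_revision_id(revision_id):
    """Split a revision id into (username, number)."""
    match = _REVISION_ID.match(revision_id)
    if match is None:
        raise ValueError(f"not a revision id: {revision_id!r}")
    return match["user"], int(match["number"])


def next_revision_id(username, known_ids):
    """Create an id whose number is one above the highest number seen so far."""
    highest = max((parse_revision_id(r)[1] for r in known_ids), default=0)
    return f"{username}{highest + 1}"
```

Taking the maximum over all known IDs, rather than over one author's IDs, keeps the number increasing across authors; two partitioned nodes could still pick the same number, which is why the username is part of the ID.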

Project revision Tree:

Format of 1 Entry - RevisionId:ParentRevision:FileId, FileId, ... (entries are separated by “||”)

Example

ProjectRevId1:0:FileId1|| ProjectRevId2:1:FileId3, FileId4|| ProjectRevId4:1:FileId1|| ProjectRevId3:3:FileId5

The project revision tree is read into memory and remains there for further access.
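Reading the project revision tree into memory could look like the sketch below, which parses the example line shown above. It assumes entries are separated by `||` and fields by `:`, as in that example; the class and function names are hypothetical. The parent field is stored as written and not interpreted, since the example alone does not settle whether it is an ID or a position.

```python
from dataclasses import dataclass


@dataclass
class ProjectRevision:
    revision_id: str
    parent: str     # kept as raw text; its interpretation is left open here
    file_ids: list  # ids of the files altered in this revision


def parse_project_tree(text):
    """Parse 'RevId:Parent:File, File|| ...' into a list of ProjectRevision."""
    revisions = []
    for raw_entry in text.split("||"):
        raw_entry = raw_entry.strip()
        if not raw_entry:
            continue
        revision_id, parent, files = raw_entry.split(":", 2)
        revisions.append(ProjectRevision(
            revision_id=revision_id.strip(),
            parent=parent.strip(),
            file_ids=[f.strip() for f in files.split(",") if f.strip()],
        ))
    return revisions


def revisions_touching(revisions, file_id):
    """Ids of the project revisions that altered the given file."""
    return [r.revision_id for r in revisions if file_id in r.file_ids]
```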

File Revision Tree:

Path = “\foo.c”, creator=“washington”

| Revision ID | Parent offset | Differences |
| --- | --- | --- |
| washington1 | NULL | Difference string |
| adams3 | 1 | Difference string |
| washington4 | 2 | Difference string |
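To rebuild a given version of a file, a node would need the differences along the path from the root revision to that version. The sketch below collects that path for the example above. It assumes that the parent offset is the 1-based position of the parent entry within the file revision tree and that NULL marks the root; the source does not state this explicitly, and the names used are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FileRevision:
    revision_id: str
    parent: Optional[int]  # assumed: 1-based position of the parent entry; None for NULL
    diff: str              # difference string relative to the parent


def chain_from_root(entries, position):
    """Return the entries from the root down to the entry at `position` (1-based)."""
    chain = []
    current = position
    while current is not None:
        if len(chain) > len(entries):
            raise ValueError("cycle in parent offsets")
        entry = entries[current - 1]
        chain.append(entry)
        current = entry.parent
    chain.reverse()  # root first, so the diffs can be applied in order
    return chain


FOO_C = [
    FileRevision("washington1", None, "Difference string"),
    FileRevision("adams3", 1, "Difference string"),
    FileRevision("washington4", 2, "Difference string"),
]
```

Applying the `diff` of each returned entry in order, starting from an empty file, would then yield the content at the requested revision.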

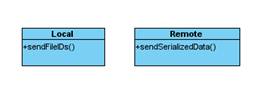

Interactions & Communication Protocol - Sequence Diagrams

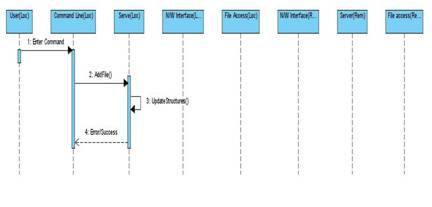

Add File

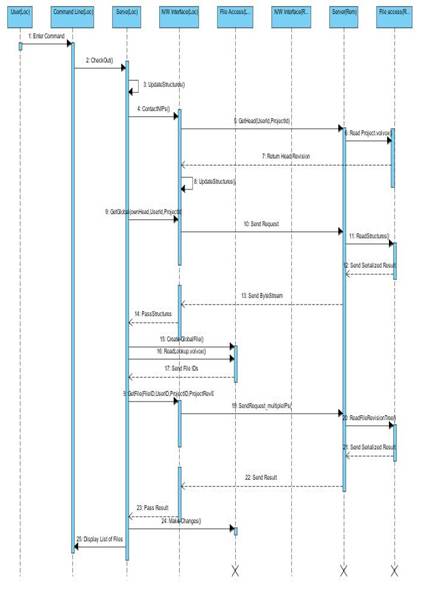

Checkout

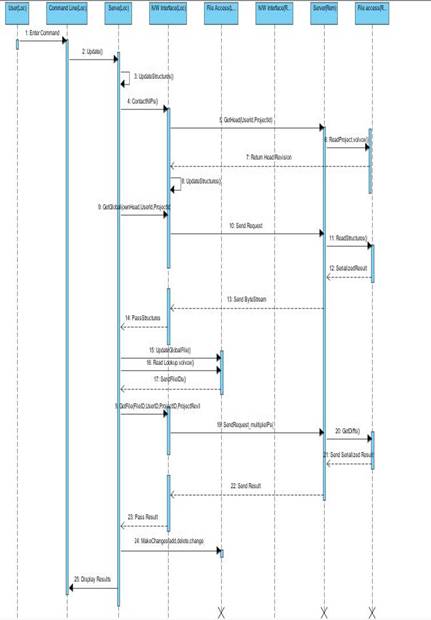

Update

Commit

Synchronization

Demo sequence

The midpoint and final demos will both be performed with 4 computers running the Volvox program.

Midpoint Demo Sequence:

This demo will show the basic functionality of the Volvox program in the presence of a full network and no conflicts. We will assume that the users have downloaded the tracker file for a project, have the Volvox software deployed, and have already joined the project. First, one user will add a file to the project and commit it. The other three users will then update to the new revision, make a change to a file, and commit the changed file.

Final Demo Sequence:

This demo will show the full Volvox functionality: its ability to merge files committed while the network was partitioned, and its ability to handle node failures during commit and update. First, one user will create a project with the creation command and will send the tracker file by email to the other 3 users. These users will use the file to join the project. Next, one user will add a file to the project and commit it. The other three users will update to the new revision, and the network will then be partitioned. One user on each subnet will change the file and commit the change. The networks will then be joined and the users will update, showing the automerge feature. One user will then make changes to the file and start a commit. During the commit, that computer will be disabled and the network will be forced to recover, showing the failure tolerance of the system. Finally, a file with the same name will be created and added to the project by two different people, who will then both attempt to commit it. This will show the system’s ability to resolve file name conflicts.

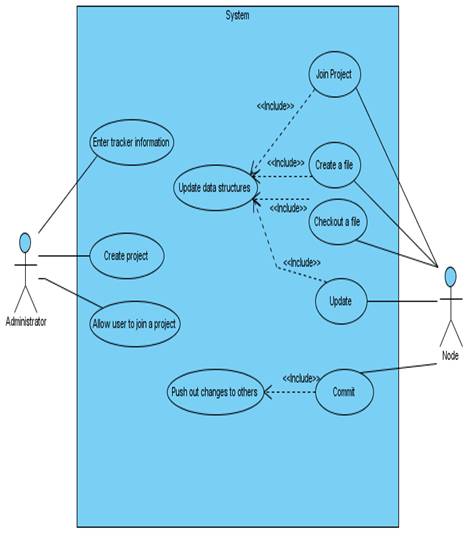

Use Cases

Stretch Goals

File level access rights – Our current design assumes all users working on a given project can access all files. This may be true for academic or free software, but larger commercial software is often developed as modules that groups work on independently. For such projects, it would make sense to provide read and write access at the directory or file level.

User specific public/private key encryption – Our current design does not account for malicious users. Such a user may be part of a project and try to poison a function, or may be an external actor who has simply acquired the shared key for the project. User specific public keys would ensure that all changes come from known users, and would allow a malicious revision to be traced back to a specific user.

Graphical User Interface – Our current design uses command line interaction with the user. While this is a flexible and powerful way of interacting, it is not friendly for new users. A graphical interface that hides the command line would improve the user experience.

Schedule And Responsibilities

References

[1] http://www.selenic.com/mercurial/wiki/index.cgi/UnderstandingMercurial